The PyCon US 2026 Packaging Summit ran Friday May 15, 2026, from 1:45 PM to 5:45 PM in Room 201A of the Long Beach Convention Center. Three talks, nine lightning talks, six roundtable discussions. Organized by Pradyun Gedam, C.A.M. Gerlach, and Jannis Leidel. This recap is for anyone who could not be in the room.

TLDR:

- Emma Smith’s revised PEP 777 (Wheel 2.0) is a minimal change with sub-PEPs layered on top; the first sub-PEP proposes Zstandard compression for ~25% smaller wheels.

- Mike Fiedler brought three PyPI abuse vectors (persistent state, open-ended releases, PyPI as a CDN), framed by 3× growth against 1× resources (~24,000 new packages per month, up from ~8,000) and a single full-time PyPI safety and security engineer.

- Mahe Iram Khan argued conda and pip are two parallel ecosystems, not two competing tools.

- Lightning talks: PEP 772 Packaging Council approved (Barry Warsaw), mobile wheel pipeline live (Malcolm Smith), Coherent + Compile-to-Flit (Jason Coombs), wheel variants for AI accelerators and shared malware scanning (Joongi Kim), conda-pypi (Daniel Holth), Nebi (Dharhas Pothina).

- Roundtables: PEP 803 abi3t, Wheel 2.0 hardening, cross-platform builds, CVE propagation, and nab (Damian Shaw’s pure-Python resolver aimed at pip swap-in).

- Involved in Python packaging? Consider running for the Packaging Council this fall or becoming a voting PSF member to nominate and vote.

For background, here are the packaging-topic PEPs resolved in the run-up to the summit (January 2025 through May 2026), newest first.

Accepted or Final:

- PEP 772 — Packaging Council governance process, Accepted 2026-04-16

- PEP 783 — Emscripten Packaging, Accepted 2026-04-06

- PEP 815 — Deprecate RECORD.jws and RECORD.p7s, Final 2026-01-28

- PEP 794 — Import Name Metadata, Accepted 2025-09-05

- PEP 792 — Project status markers in the simple index, Final 2025-07-08

- PEP 770 — Improving measurability of Python packages with SBOM, Accepted 2025-04-11

- PEP 751 — A file format to record Python dependencies for installation reproducibility, Final 2025-03-31

- PEP 739 — build-details.json 1.0, a static description file for Python build details, Accepted 2025-02-05

Rejected or Withdrawn:

- PEP 708 — Extending the Repository API to Mitigate Dependency Confusion Attacks, Rejected 2026-04-02 after three years provisionally accepted, because the required implementation conditions (PyPI UI, second-repository support in pip with positive user feedback) were never met

- PEP 763 — Limiting deletions on PyPI, Withdrawn 2025-09-21; the conclusion was that PEPs are not the appropriate venue for changes to PyPI’s deletion or usage policies

- PEP 759 — External Wheel Hosting, Withdrawn 2025-01-31; the community preferred richer multi-index priority and trust controls (pointing toward PEP 766) over external hosting

Several of these came up during the summit and are referenced inline below.

Welcome

The three organizers opened the summit by sharing the HackMD notes document for collaborative live notes, and by reminding the room that this is where conversations start; proposals move from here into discuss.python.org and the PEP process. The deeper official notes from the summit are the canonical source for anything I left out of this recap.

Revisiting Wheel 2.0 and Better Compression — Emma Smith

Emma Smith (emmatyping.dev) authored PEP 777, “How to Re-invent the Wheel” and is a CPython core developer. Slides: Google Slides deck.

The prerequisite stdlib work is also hers: she authored PEP 784 (Adding Zstandard to the standard library) and then, in her “Decompression is up to 30% faster in CPython 3.15” post (November 2025), wrote up the PyBytesWriter-based decompression rewrite that made zstd decompression 25–30% faster and zlib decompression 10–15% faster for payloads of at least 1 MiB. That work is what makes a Zstandard-based Wheel 2.0 practical, though wide adoption waits until Python 3.13 reaches end-of-life in October 2029, when 3.14+ becomes the oldest supported interpreter and installers can rely on compression.zstd being present everywhere.

The earlier version of PEP 777 added a wheel-version field to the filename so existing installers would skip Wheel 2.0 outputs. Three objections came back from the community: updates would silently stop on old installers, the existing wheel-compatibility schema was felt to be sufficient, and the pip install ./wheel_file.whl path was not covered. Emma Smith worked through this with Donald Stufft and landed on a different plan.

The Python packaging ecosystem is too distributed to migrate coherently, so any all-at-once approach has too many downsides. The revised PEP 777 makes the minimum change: wheels stay zip files, and the only hard requirement is that dist-info contains a Wheel-Version entry stored or DEFLATE-compressed inside the wheel. Each package index (PyPI itself, internal mirrors like Artifactory, conda-forge wheel builds, alternative indices like Anaconda.org and devpi) decides when to start accepting Wheel 2.0 uploads. Each further change rides on top as its own sub-PEP, individually motivated, and the full PEP 777 plus its sub-PEPs get accepted or rejected together.

flowchart TB
classDef gate fill:#FEE2E2,stroke:#B91C1C,color:#7F1D1D;
classDef parent fill:#DBEAFE,stroke:#1E40AF,color:#1E3A8A;
classDef child fill:#DCFCE7,stroke:#15803D,color:#14532D;
classDef future fill:#E5E7EB,stroke:#4B5563,color:#1F2937;
G[All-or-nothing acceptance gate<br/>PEP 777 + every sub-PEP voted as one bundle]:::gate
G --> P[PEP 777 — Wheel 2.0 minimal change<br/>Wheel-Version key in dist-info<br/>Data-Format key for the container]:::parent
P --> S1[Sub-PEP — Zstandard compression<br/>data.tar.zst inside the outer ZIP]:::child
P --> S2[Future sub-PEP — container hardening<br/>tighten allowed ZIP and tar feature set]:::future
P --> S3[Future sub-PEP — symlinks, ZIP64,<br/>other follow-on changes]:::future
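
To make the minimal-change idea concrete, here is a small sketch of how an installer might read Wheel-Version and refuse a major version it does not understand. It assumes the key lives in the .dist-info/WHEEL file, as it does in today's wheel format; SUPPORTED_MAJOR, read_wheel_version, can_install, and make_demo_wheel are illustrative names, not part of pip or of any PEP.

```python
import io
import zipfile
from email.parser import Parser

SUPPORTED_MAJOR = 1  # assumption: this hypothetical installer only understands Wheel 1.x


def read_wheel_version(wheel_file) -> tuple:
    """Return the Wheel-Version from the *.dist-info/WHEEL entry as a tuple of ints."""
    with zipfile.ZipFile(wheel_file) as zf:
        entries = [n for n in zf.namelist() if n.endswith(".dist-info/WHEEL")]
        if len(entries) != 1:
            raise ValueError("expected exactly one .dist-info/WHEEL entry")
        text = zf.read(entries[0]).decode("utf-8")
    # WHEEL uses the same "Key: value" header syntax as email messages.
    version = Parser().parsestr(text)["Wheel-Version"]
    if version is None:
        raise ValueError("WHEEL file has no Wheel-Version key")
    return tuple(int(part) for part in version.split("."))


def can_install(wheel_file) -> bool:
    """Refuse wheels whose major Wheel-Version is newer than SUPPORTED_MAJOR."""
    return read_wheel_version(wheel_file)[0] <= SUPPORTED_MAJOR


def make_demo_wheel(wheel_version: str) -> io.BytesIO:
    """Build a tiny in-memory zip that contains only a WHEEL file (demo helper)."""
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        zf.writestr(
            "mypackage-1.0.dist-info/WHEEL",
            f"Wheel-Version: {wheel_version}\nGenerator: demo\n",
        )
    buf.seek(0)
    return buf


print(can_install(make_demo_wheel("1.0")))
print(can_install(make_demo_wheel("2.0")))
```

Because the check only needs one small metadata entry that old tooling can already read, an old installer can fail loudly on a 2.0 wheel instead of silently skipping it.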

The first sub-PEP is Zstandard compression. Emma analyzed the top 1,000 most-downloaded projects on PyPI:

- About 25% smaller wheels on average.

- About 100 PB of bandwidth saved across just those 1,000 projects.

- About 36 years of cumulative decompression time saved per month.

A constraint shaped the rollout: pure-Python installers, including pip, prefer to avoid C dependencies. Emma landed Zstandard in the standard library, which shipped in Python 3.14 as compression.zstd. The wheel proposal stores non-metadata files in a data.tar.zst archive, itself stored without further compression inside the outer zip, and adds a Data-Format key to the wheel metadata so future container formats can swap in.

flowchart TB
classDef meta fill:#FEF3C7,stroke:#B45309,color:#78350F;
classDef proj fill:#DCFCE7,stroke:#15803D,color:#14532D;
subgraph WHL["mypackage-1.0-py3-none-any.whl — outer ZIP"]
  direction TB
  subgraph DI["mypackage-1.0.dist-info/ — DEFLATE-compressed entries"]
    direction LR
    W[WHEEL]:::meta
    M[METADATA]:::meta
    R[RECORD]:::meta
  end
  subgraph TAR["data.tar.zst — STORED in the ZIP, no double compression"]
    direction LR
    PL[purelib/]:::proj
    PLT[platlib/]:::proj
    SC[scripts/]:::proj
    DT[data/]:::proj
  end
end
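
The layout can be sketched with the standard library alone. This is a rough illustration of the container mechanics, not the sub-PEP's reference implementation: build_inner_archive and build_wheel are made-up names, the Wheel-Version and Data-Format values are placeholders, and on interpreters older than 3.14 (where compression.zstd is missing) the sketch falls back to an uncompressed tar so it still runs.

```python
import io
import tarfile
import zipfile

try:  # compression.zstd is in the standard library from Python 3.14
    from compression import zstd
except ImportError:  # older interpreters: keep the sketch runnable without zstd
    zstd = None


def build_inner_archive(files: dict) -> bytes:
    """Pack project files into a tar, Zstandard-compressed when zstd is available."""
    raw = io.BytesIO()
    with tarfile.open(fileobj=raw, mode="w") as tar:
        for name, data in sorted(files.items()):
            info = tarfile.TarInfo(name)
            info.size = len(data)
            tar.addfile(info, io.BytesIO(data))
    payload = raw.getvalue()
    return zstd.compress(payload) if zstd is not None else payload


def build_wheel(metadata: dict, files: dict) -> bytes:
    """Outer zip: DEFLATE for dist-info entries, STORED for the inner archive."""
    out = io.BytesIO()
    with zipfile.ZipFile(out, "w") as zf:
        for name, text in metadata.items():
            zf.writestr(
                f"mypackage-1.0.dist-info/{name}",
                text,
                compress_type=zipfile.ZIP_DEFLATED,
            )
        # The inner archive is already compressed, so the zip layer only stores it.
        zf.writestr(
            "data.tar.zst",
            build_inner_archive(files),
            compress_type=zipfile.ZIP_STORED,
        )
    return out.getvalue()


wheel_bytes = build_wheel(
    {"WHEEL": "Wheel-Version: 2.0\nData-Format: tar-zstd\n"},  # placeholder values
    {"purelib/mypackage/__init__.py": b"print('hello')\n"},
)
with zipfile.ZipFile(io.BytesIO(wheel_bytes)) as zf:
    for info in zf.infolist():
        print(info.filename, info.compress_type)
```

Keeping the metadata as ordinary zip entries means tools that only need WHEEL or METADATA never touch the tar layer.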

Threads from the Q&A:

- Why not zstd-compressed entries inside the zip? Per-file compression always loses to whole-archive compression, and per-file zstd would create Python-version compatibility issues when installing into an older interpreter’s environment.

- Compression-differential attacks. Mike Fiedler raised this. The tentative answer is to verify the declared decompressed size in the zstd header against the actual size, which matches what scanners already do.

- Stored entries. uv reads selected zip entries as stored so it can mmap them. Metadata stays accessible the same way; other files go through one extra tar-extraction step.

- Symlinks. Tar supports them; zip mostly does not. Emma’s instinct is to keep symlinks out for now to avoid the escape-the-environment surface.

- Reproducibility. Zstd at the same version and compression level is deterministic; an embedded checksum at the end of the stream can be disabled, and Emma agreed it could move into RECORD.

- Timeline. Provisional acceptance within a year or two of writing, no upper bound on when an index can flip the switch. Emma thinks five years is a reasonable expectation for general adoption.
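
For the compression-differential point, the declared size lives in the Zstandard frame header (the Frame_Content_Size field described in RFC 8878). The sketch below parses that header by hand purely for illustration; a real installer would more likely lean on a zstd library, and declared_content_size and size_matches are hypothetical names. The actual size would be the byte count an unpacker observes after decompressing.

```python
from typing import Optional

ZSTD_MAGIC = b"\x28\xb5\x2f\xfd"  # magic number 0xFD2FB528, stored little-endian


def declared_content_size(frame: bytes) -> Optional[int]:
    """Return the Frame_Content_Size declared in a Zstandard frame header, if any."""
    if len(frame) < 5 or frame[:4] != ZSTD_MAGIC:
        raise ValueError("not a Zstandard frame")
    descriptor = frame[4]
    fcs_flag = descriptor >> 6                       # bits 7-6
    single_segment = bool(descriptor & 0x20)         # bit 5
    dict_id_size = (0, 1, 2, 4)[descriptor & 0x03]   # bits 1-0
    fcs_size = (1 if single_segment else 0, 2, 4, 8)[fcs_flag]
    if fcs_size == 0:
        return None  # the frame does not declare its decompressed size
    # A Window_Descriptor byte is present only when Single_Segment is not set.
    offset = 5 + (0 if single_segment else 1) + dict_id_size
    field = frame[offset:offset + fcs_size]
    if len(field) != fcs_size:
        raise ValueError("truncated frame header")
    value = int.from_bytes(field, "little")
    # The two-byte encoding stores the size minus 256.
    return value + 256 if fcs_size == 2 else value


def size_matches(frame: bytes, actual_size: int) -> bool:
    """True only when a size is declared and equals what was actually unpacked."""
    declared = declared_content_size(frame)
    return declared is not None and declared == actual_size


header = bytes.fromhex("28b52ffd2005")  # single-segment frame declaring 5 bytes
print(declared_content_size(header))
```

Treating a missing declaration as a failure is one possible policy; the summit discussion only called the size check a tentative answer.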

Daniel Holth added context from conda-package-streaming: tar inside zip streams cleanly through zstd decompression and tar unpacking, whereas a hypothetical inner zip would force decompressing the whole inner archive before reading any member.

Limiting vectors and incentives for abuse — Mike Fiedler

Mike Fiedler (miketheman.net) is the Safety and Security Engineer at the PSF, working full-time on PyPI. Slides: PDF.

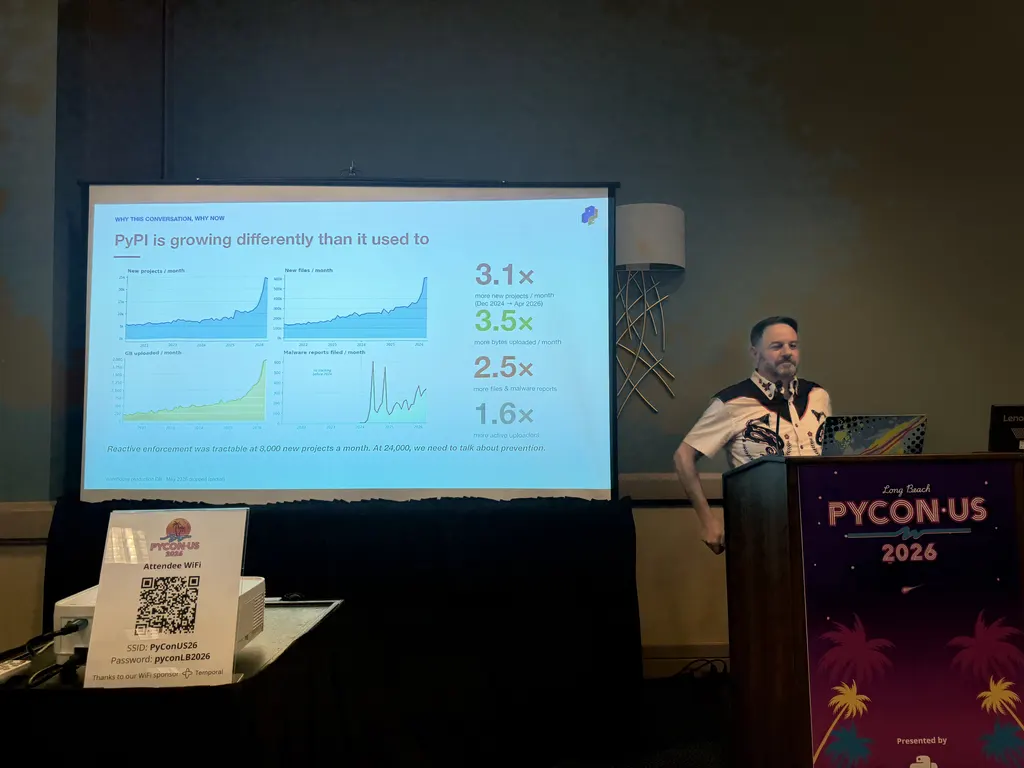

He opened with the growth chart above: between December 2024 and April 2026, PyPI saw 3.1× more new projects per month, 3.5× more bytes per month, 2.5× more files and malware reports, and 1.6× more active uploaders. Monthly new-project count moved from around 8,000 (manageable through reactive moderation) to around 24,000. PyPI holds about 36 TB of live storage and roughly the same again in cold storage of deleted files.

The recurring theme: load on every axis grew roughly 3×, while resources stayed at 1×. Physical infrastructure scales with money. The human side does not scale that fast; Mike is still the only full-time PyPI-specific safety and security engineer, supported by a network of volunteer reporters and by Seth Larson (PSF Security Developer-in-Residence) on the broader Python ecosystem. Some support tickets sit in longer queues than they used to, and reactive moderation alone no longer keeps up with the volume. Hence the prevention work below.

Recent work on the PyPI side:

- Rate limits tightened from 40 new projects per hour to 4 new projects per day per uploader.

- Trusted publishing adoption rose from about 10% to about 30% of new file uploads year over year.

- Project archival lifecycle status (advisory; installers do not act on it yet).

- Pending-publisher cleanup after 30 days of disuse.

- A growing prohibited-name list on the admin side.

The three vectors Mike wanted to discuss:

1. Persistent state

Mike’s framing: PyPI is a warehouse, and people leave things in the warehouse. Deletions today move files to cold storage but the content stays accessible by URL at files.pythonhosted.org. Attackers know this and use the trusted hostname to push “install from this URL” links after the index entry has been removed. People also upload non-package content (MP3 collections, ebooks, one project that used PyPI as Dropbox), which has to be removed for legal reasons.

Mike’s questions: should the cold-storage layer eventually be garbage-collected; should users be allowed to delete or should that move to admin-only; should the quota system stay tied to user-driven deletion when those deletions do not actually free space.

In the discussion I suggested a two-phase delete: mark for deletion, then actually delete after one to six months. Mike noted the copyrighted-material case where the legal obligation rules out a long grace window.

Related sub-thread: unused API tokens. PyPI has over a million user accounts and many of those accounts have provisioned API tokens that never got used. The room agreed PyPI should notify and then delete unused tokens, and that the trusted-publishing setup flow should offer to delete the corresponding API token at the same time. A few voices flagged the migration cost: applying this to existing tokens without an opt-in path could break CI that was set up and forgotten years ago.

2. Open-ended releases

A pinned version is not always frozen content. A publisher today can upload new files (additional wheels, for example) to an existing release after the fact, and ==1.0.0 will pick them up. Mike asked whether to lock releases after a window (he suggested seven days) and ask publishers to issue post-releases instead.

The discussion landed on a tension. Uploading to an existing release is useful for back-filling new-Python or new-architecture wheels months after the fact, and the room wanted to keep that capability available. Two ideas surfaced (any change here would also need an amendment to PEP 440, Version Identification and Dependency Specification, not only to installer behaviour):

- Stale-release upload as an opt-in. Today it works by default; flip it so the publisher has to re-open the release in the UI, with extra security checks.

- Cooldown windows. Make a freshly uploaded file scannable but invisible to installers for a day, so malware scanners get a head start.
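
The cooldown idea can be sketched as a filter an index might apply before showing files to installers. Everything here is assumed for illustration: the one-day COOLDOWN mirrors the figure floated in the room, and the record shape with filename and upload_time keys is hypothetical, not PyPI's data model.

```python
from datetime import datetime, timedelta, timezone

COOLDOWN = timedelta(days=1)  # assumption: the one-day window mentioned in the discussion


def visible_files(files, now):
    """Return only the files uploaded at least COOLDOWN before `now`."""
    return [f for f in files if now - f["upload_time"] >= COOLDOWN]


now = datetime(2026, 5, 15, 12, 0, tzinfo=timezone.utc)
files = [
    {"filename": "pkg-1.0.0-py3-none-any.whl", "upload_time": now - timedelta(days=3)},
    {"filename": "pkg-1.0.0-cp315-abi3-win_arm64.whl", "upload_time": now - timedelta(hours=2)},
]
for f in visible_files(files, now):
    print(f["filename"])
```

Scanners would still see every file immediately; only the installer-facing view lags behind.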

The bigger change is PEP 694, Upload 2.0 API for Python Package Indexes, which would give publishers staged uploads and atomic publish, and would tie into a sealed-release state.

Emma noted that ==1.0.0 already pulls any matching local versions and dot-zero variants, so any change to upload-after-release semantics needs the version-specifier spec amended, not just installer behavior.
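
A deliberately simplified sketch of that matching behaviour follows. It handles only plain release numbers with an optional .postN and an optional +local label, so it is not a PEP 440 implementation (the packaging library is the real one); strict_equal and _key are illustrative names.

```python
import re

# Simplified grammar: release digits, optional .postN, optional +local label.
_VERSION = re.compile(
    r"^(?P<release>\d+(?:\.\d+)*)(?P<post>\.post\d+)?(?:\+(?P<local>[a-z0-9.]+))?$"
)


def _key(version: str):
    match = _VERSION.match(version)
    if match is None:
        raise ValueError(f"unsupported version in this sketch: {version!r}")
    release = [int(part) for part in match["release"].split(".")]
    while len(release) > 1 and release[-1] == 0:
        release.pop()  # zero padding: 1.0.0 and 1.0 compare equal
    return tuple(release), match["post"], match["local"]


def strict_equal(specified: str, candidate: str) -> bool:
    """Approximate `==specified` matching against a candidate version."""
    s_release, s_post, s_local = _key(specified)
    c_release, c_post, c_local = _key(candidate)
    if (s_release, s_post) != (c_release, c_post):
        return False
    # A specifier without a local label ignores the candidate's local label.
    return s_local is None or s_local == c_local


for candidate in ["1.0", "1.0.0+cu121", "1.0.0.post1", "1.0.1"]:
    print(candidate, strict_equal("1.0.0", candidate))
```

The first two candidates match and the last two do not, which is why a pin on ==1.0.0 can already resolve to more than one uploaded version string.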

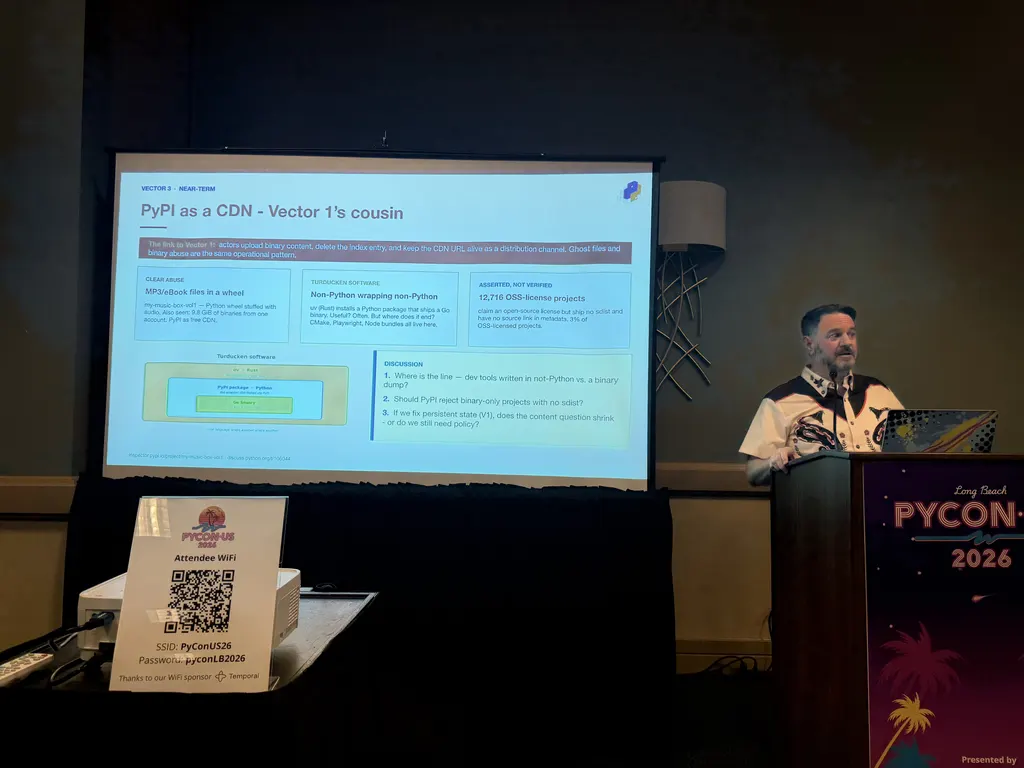

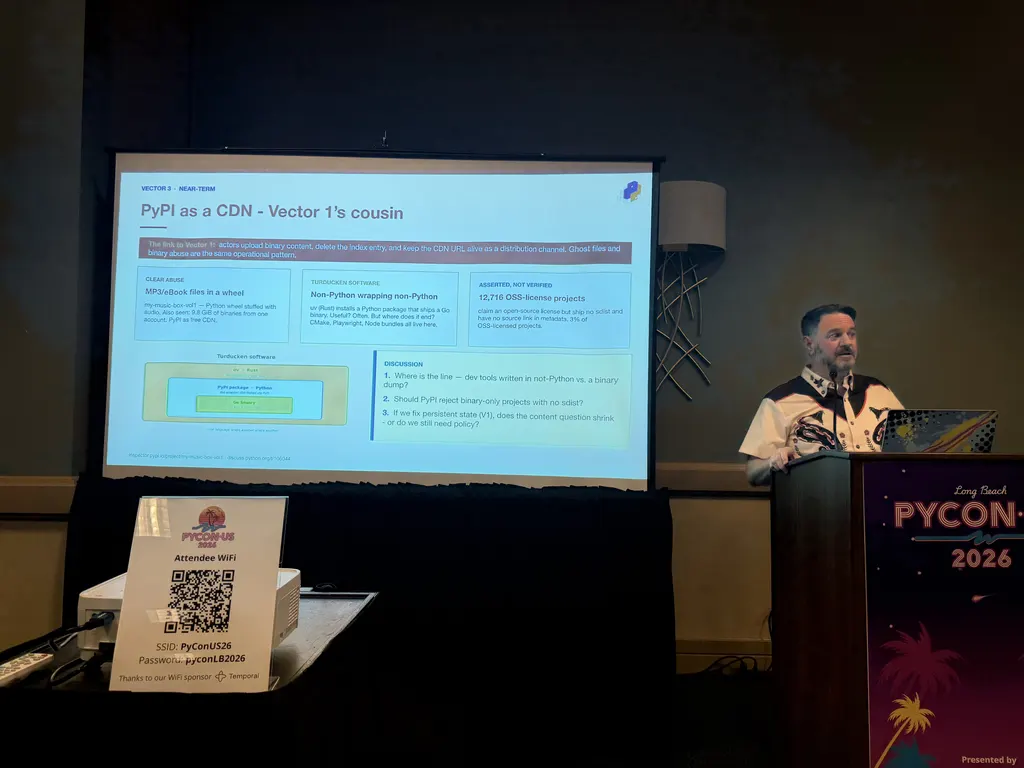

3. PyPI as a CDN

The vector Mike called Turducken Software: a Rust binary installs a Python package that ships a Go binary that invokes a Zig compiler. PyPI now hosts Java JARs, Node node_modules directories, and complete language runtimes from people who use it as a general content-distribution network. The slide noted 12,716 projects that declare an OSS license but ship neither an sdist nor a source-repository link in metadata, around 3% of the OSS-licensed set.

The open question: where does the community want PyPI to draw the line between “Python package that bundles a binary” and “not a Python package at all”? Mike asked the room to start thinking about it rather than proposing a policy himself.

He flagged namespaces as a fourth topic that would take four hours on its own, and set it aside for another day.

Encouraging the community to view Packaging in terms of ecosystems, not tools — Mahe Iram Khan

Mahe Iram Khan is a software engineer at Anaconda based in Berlin and has maintained conda for about four years. The talk grew out of three questions she keeps getting asked: what is the difference between conda and pip, when should you use one or the other, and can you mix them.

Her thesis: conda and pip are best understood as two parallel ecosystems with different origins, not as two competing tools. The history sketch:

- distutils shipped in the standard library with no dependency management, no uninstall, and a release cadence tied to CPython.

- setuptools and easy_install (2004) added dependency handling and PyPI integration.

- pip and virtualenv (2008) added uninstall, better error messages, and resolved the day-to-day pain points for most of the community.

- conda (2012) was built for the scientific Python community, whose heavy non-Python dependencies the pip-era tooling could not handle. It was language-agnostic from day one and combined package and environment management in a single tool.

flowchart LR
classDef stdlib fill:#E5E7EB,stroke:#4B5563,color:#1F2937;
classDef pip fill:#DBEAFE,stroke:#1E40AF,color:#1E3A8A;
classDef conda fill:#DCFCE7,stroke:#15803D,color:#14532D;
D[distutils<br/>stdlib<br/>2000-2023]:::stdlib --> S[setuptools<br/>+ easy_install<br/>2004]:::pip
S --> P[pip + virtualenv<br/>2008]:::pip
S -.scientific Python<br/>needs.-> C[conda<br/>2012<br/>language-agnostic]:::conda
P --> UV[poetry 2018<br/>uv 2024<br/>modern pip-ecosystem]:::pip
C --> M[mamba 2019<br/>pixi 2023<br/>modern conda-ecosystem]:::conda

Later tools fall into one ecosystem or the other: poetry and uv into the pip ecosystem, mamba and pixi into the conda ecosystem. Comparing them across ecosystems produces a lot of the confusion users hit when they read packaging advice online.

Mahe acknowledged the lines are blurry and that this is a personal sense-making frame, not a formal theory. With a packaging council on the way (see Barry’s lightning talk below), she asked the room how to give new users a clearer on-ramp.

The discussion ran longer than the talk. Threads worth keeping:

- Vendor-neutral on-ramp. Deborah Nicholson (PSF) noted there is no “right way in” on python.org for newcomers to packaging today and offered PSF funding for someone to build one. The current set of links was added piecemeal over the years.

- More than two ecosystems. A consultant pointed out that Ecosyste.ms treats every distribution channel (PyPI, conda, Debian, Nix, Spack, Homebrew) as a separate ecosystem with different metadata semantics. Security advisories published on a PyPI package do not propagate to a conda or Nix repackaging of the same code, which leaves real CVE-coverage gaps that translation layers between ecosystems would close.

- The scientific-community frame. A long exchange on two framings: can wheels eventually solve the scientific use case (multiple compiled languages, hardware variance), or does the conda model exist because PyPI cannot? Ralf Gommers’ pypackaging-native was cited as the reference on this. The wheel-next working group’s effort on PEP 817 wheel variants and PEP 825 is the visible bridge being built between the two ecosystems.

- Publishing-side experience. As an author of a pure-Python CLI tool, you ship to PyPI and you are done; the package showing up in Homebrew three months later is somebody else’s work. Getting more involved in the various places your work is republished is a specialist skill the community does not document well.

Lightning talks

PEP 772 Packaging Council update — Barry Warsaw

PEP 772 (Packaging Council) is fully approved. Barry Warsaw walked through the elections plan: aligned with the PSF board election in the fall, three two-week phases (including nominations and voting), two cohorts on a rotating two-year cycle, PSF membership required to nominate or be nominated. Deborah Nicholson called out the spread-the-word problem: not everyone interested is in this room. Barry encouraged everyone present to consider running.

Mobile packaging update — Malcolm Smith

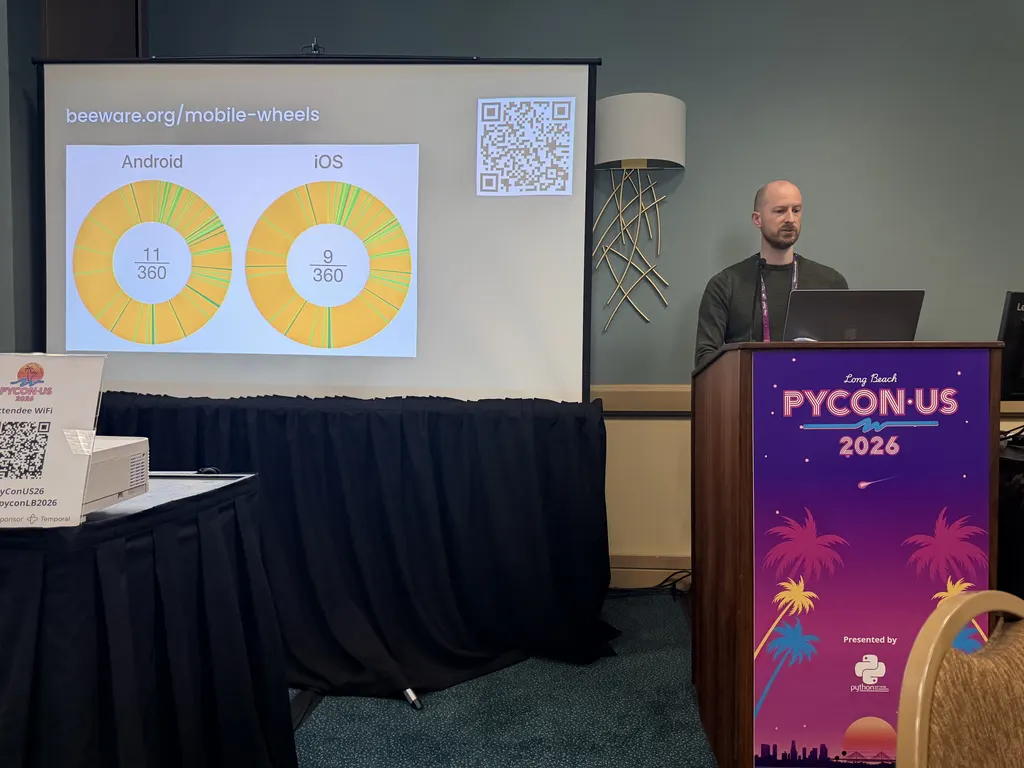

A year on from last summit, Malcolm Smith reported the build / host / install pipeline is in place. cibuildwheel supports Android and iOS, PyPI accepts the new wheel tags, and the Python installer ships official support. Kivy joined BeeWare in supporting mobile this year. The dashboard above shows 11/360 Android wheels and 9/360 iOS wheels across the top packages, mostly user-request driven. The BeeWare team is at the sprints Monday and Tuesday and happy to help maintainers add mobile support.

Live tracker: beeware.org/mobile-wheels.

Coherent System and Compile-to-Flit bootstrapping — Jason Coombs

Jason Coombs (jaraco.com, GitHub: @jaraco) maintains over 100 packages and built the Coherent build system to keep that volume sustainable. Highlights: automatic dependency inference from imports (overridable but mostly correct by default), automatic author and version inference, a lightweight pyproject.toml, and a Flit-based build that keeps the whole thing minimal. Dependency inference draws on a MongoDB database derived from PyPI that maps imports back to projects.

The companion trick is Compile-to-Flit, Jason’s answer to the bootstrap problem for build backends with their own dependencies. Setuptools solves the problem by vendoring; Flit solves it by having no dependencies; Hatch uses backend-path. Compile-to-Flit takes a backend with N dependencies and produces an sdist whose pyproject.toml declares only flit_core, with the metadata concretized at sdist build time. Coherent itself has 15 dependencies and would be unworkable to vendor; with Compile-to-Flit the sdist stays tiny. The known trade-off: users can no longer pip install the project straight from a git checkout and need to consume the published sdist instead. Jason is willing to live with that constraint, and is planning to apply the same treatment to Setuptools so it can have proper dependencies again.

Wheel variants for AI accelerators, and shared malware scanning — Joongi Kim

Joongi Kim (GitHub: @achimnol; CTO at Lablup, creator of Backend.AI) gave back-to-back lightning talks on two parallel proposals.

The first (slides) extends the wheel-variants idea (PEP 817) into container ecosystems. Today docker pull auto-selects by architecture but not by hardware feature, and Kubernetes’ Dynamic Resource Allocation makes users configure GPU and vendor-specific matching by hand. Joongi targets not just NVIDIA CUDA SM architectures but also Rebellions and Furiosa NPUs, and pointed at Backend.AI’s Sokovan scheduler as an existing implementation of per-node variant providers feeding into per-workload variant labels. The design lets compute nodes report their properties to the scheduler, which matches them against container-image labels equivalent to the wheel-variant properties pip would resolve.

The second (slides) proposed a way to share malware-scan results across providers. Lablup mirrors all of PyPI (up to roughly 40 TB, doubled since 2024) for their Reservoir mirror and runs monthly scans with Ahnlab V3 and ClamAV. The proposal is a signed “scanned by X on date Y” metadata field exposed via the index API, modelled on Google’s Assured OSS, so no single team has to keep up with the upload volume alone. Sign-up happened on-site.

conda-pypi reusing the pip stack — Daniel Holth

Daniel Holth walked through conda-pypi. Slides: dholth.github.io/presentation-conda-pypi-internals. The integration builds on unearth, pypa/installer, and pypa/build, and threads build requirements through the standard backend interfaces in PEP 517 (A build-system independent format for source trees) and PEP 660 (Editable installs for pyproject.toml based builds).

flowchart LR
classDef input fill:#DBEAFE,stroke:#1E40AF,color:#1E3A8A;
classDef step fill:#FEF3C7,stroke:#B45309,color:#78350F;
classDef output fill:#DCFCE7,stroke:#15803D,color:#14532D;
PT[pyproject.toml]:::input --> BR[Extract build requires<br/>PEP 517 / PEP 660]:::step
BR --> CI[Install build requires<br/>via conda not pip]:::step
CI --> RB["Run the project's backend<br/>(setuptools / flit / hatchling)"]:::step
RB --> W[Wheel artefact]:::step
W --> CP[Wrap as conda package<br/>editable installs supported]:::output

Daniel noted that build backends like setuptools, flit, and hatchling arriving from PyPI in a mixed conda + pip environment is a common source of subtle issues; the conda-pypi flow keeps those backends on the conda side. Editable installs work end-to-end. pip runs inside the same Python environment it targets; conda runs in a separate one. The integration handles the bridge without manual intervention.

Nebi — environment management for teams — Dharhas Pothina

Repository: github.com/nebari-dev/nebi; web entry point at nebi.nebari.dev. Dharhas Pothina (GitHub: @dharhas; OpenTeams) presented a successor to conda-store for teams whose environments live outside the application code. Nebi is a server plus CLI built on top of Pixi that adds version history and rollback, OCI-registry distribution (Quay, GHCR), role-based access control, and named workspace activation. Lock files and spec files are versioned together. The long-term goal is reproducible environments for science.

Roundtable discussions

The roundtables ran in parallel. These notes come from the rooms I was in plus the shared notes for the others.

PEP 803 — abi3t Stable ABI for free-threaded Python — Petr Viktorin

Petr Viktorin (encukou.cz) walked through PEP 803 (abi3t), the Stable ABI for free-threaded CPython. The resulting wheel filename looks like mypackage-0.1-cp315-abi3.abi3t-manylinux1_x86_64.manylinux_2_5_x86_64.whl. The new bit is the compound ABI tag abi3.abi3t, which says the wheel offers the Stable ABI for both the GIL build and the free-threaded build of CPython 3.15.

flowchart LR
classDef name fill:#DBEAFE,stroke:#1E40AF,color:#1E3A8A;
classDef ver fill:#FEF3C7,stroke:#B45309,color:#78350F;
classDef py fill:#DCFCE7,stroke:#15803D,color:#14532D;
classDef abi fill:#FEE2E2,stroke:#B91C1C,color:#7F1D1D;
classDef plat fill:#E5E7EB,stroke:#4B5563,color:#1F2937;
N[mypackage<br/>distribution name]:::name --> V[0.1<br/>version]:::ver
V --> Py[cp315<br/>Python tag — CPython 3.15]:::py
Py --> A[abi3.abi3t<br/>compound ABI tag<br/>GIL Stable ABI + free-threaded Stable ABI]:::abi
A --> Pl[manylinux1_x86_64.manylinux_2_5_x86_64<br/>platform tags]:::plat
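
Compound tags in a wheel filename expand to every combination of their dot-separated parts, following the compressed tag set rule in the existing wheel filename convention. A short sketch of that expansion is below; expand_wheel_tags is an illustrative helper, not an API from the packaging library.

```python
from itertools import product


def expand_wheel_tags(filename: str) -> list:
    """Expand the python, ABI, and platform tag sets of a wheel filename."""
    if not filename.endswith(".whl"):
        raise ValueError("not a wheel filename")
    parts = filename[: -len(".whl")].split("-")
    if len(parts) not in (5, 6):  # a sixth part would be the optional build tag
        raise ValueError("unexpected number of filename components")
    python_tags, abi_tags, platform_tags = (part.split(".") for part in parts[-3:])
    return list(product(python_tags, abi_tags, platform_tags))


name = "mypackage-0.1-cp315-abi3.abi3t-manylinux1_x86_64.manylinux_2_5_x86_64.whl"
for tag in expand_wheel_tags(name):
    print("-".join(tag))
```

The example filename yields four tag triples: one Python tag, two ABI tags, and two platform tags. An installer running on free-threaded CPython would look for a triple whose ABI part is abi3t.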

Extension suffixes are .abi3t.so on Linux and macOS (with .so as a workaround for the 3.13–3.14 window if you want both) and .pyd on Windows. pip already supports installing abi3t wheels. Other installers need to accept the abi3t tag for free-threaded CPython in the same places they accept abi3 today, and build tools need to opt in when they want to expose the feature.

Yoda conditions in PEP 508 markers

The marker grammar in PEP 508 and the dependency specifiers spec is underspecified for “Yoda-form” expressions ('3.13.*' == python_full_version), and the packaging library does not expose the parsed marker tree as a public API. The room agreed an editorial PR against the spec should clarify that markers always compare a marker variable (such as python_full_version) on the left with a literal on the right, mention the complexity that lets implementations be loose, and add a failing example in the tests. uv does not implement '3.13.*' == python_full_version. The ~= operator is also confusing in this position.

Wheel 2.0 and Zstandard container hardening

Picking up from Emma’s talk. Concrete suggestions for tightening what a valid Wheel 2.0 container looks like:

- Restrict the zip and tar feature set. Parser-differential attacks have come from GNU tar versus POSIX tar extensions, both of which most unpackers tolerate. A Wheel 2.0 sub-PEP should pick one and ban the other, and ideally specify the outer zip’s binary layout exactly (it only ever holds two regular files with specific compression methods).

- No hard links, no device files, no resource forks or NTFS streams, no xattrs.

- ZIP64 for large wheels. The choice between requiring ZIP64 unconditionally or only above a size threshold needs to stay compatible with what major build tools can produce.

- data.tar.zst or wheel-filename.data.tar.zst. Daniel Holth’s preference: keep the existing .data convention so two wheels can be unpacked side-by-side without collision, and list only the tar archive in RECORD (not its members), matching existing rules.

- Symlinks. Tar supports them. The question of whether Wheel 2.0 should allow them inside the data archive stayed open; the simplest path is to keep them out.

- Self-describing format key. Emma agreed the Data-Format field should name the container too (tar-zstd rather than zstd), so a later sub-PEP can switch the inner archive format cleanly.
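
Several of these restrictions are mechanical enough to sketch with the standard tarfile module. The checker below is one possible reading of the suggestions (regular files and directories only, no paths that escape the root), not the wording of any sub-PEP; check_members and make_demo_tar are hypothetical names, and the demo works on an uncompressed tar because the zstd layer is a separate concern.

```python
import io
import tarfile
from pathlib import PurePosixPath


def check_members(archive: bytes) -> list:
    """Return a list of problems found in an uncompressed tar archive."""
    problems = []
    with tarfile.open(fileobj=io.BytesIO(archive), mode="r:") as tar:
        for member in tar:
            path = PurePosixPath(member.name)
            if path.is_absolute() or ".." in path.parts:
                problems.append(f"{member.name}: escapes the archive root")
            elif not (member.isreg() or member.isdir()):
                # Covers symlinks, hard links, device files, and FIFOs.
                problems.append(f"{member.name}: not a regular file or directory")
    return problems


def make_demo_tar() -> bytes:
    """Build a tar with one ordinary file and one symlink (demo helper)."""
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w") as tar:
        tar.addfile(tarfile.TarInfo("purelib/mypackage/__init__.py"), io.BytesIO(b""))
        link = tarfile.TarInfo("purelib/mypackage/evil")
        link.type = tarfile.SYMTYPE
        link.linkname = "/etc/passwd"
        tar.addfile(link)
    return buf.getvalue()


for problem in check_members(make_demo_tar()):
    print(problem)
```

An allow-list of member types is easier to reason about than a deny-list, since anything the format adds later is rejected by default.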

Cross-platform environments and wheel building

Android, iOS, and Emscripten each landed their own cross-platform wheel-building solution, with significant overlap between the three. They all rely on a .pth file that monkey-patches sysconfig and other stdlib modules to simulate running on the target platform, because most Python build systems still have no concept of cross-compilation. Even for established platforms, cross-compilation matters when CI for the target architecture is limited (Apple Silicon before CI vendors caught up; Windows on ARM today). The longer-term path is for build backends (setuptools, meson-python, hatchling) to gain native cross-compilation support driven from the Python environment. cibuildwheel does this for the platforms it recognizes.

Improving security metadata across ecosystems

CVEs and malware are different problems. The open work is propagating CVE coverage across repackagings of the same project, where each ecosystem has its own naming and version conventions and the NVD, OSV, and GHSA mappings cover only part of the surface.

A single project gets repackaged across many ecosystems, each with its own name and version style:

| Ecosystem | Package name and version for requests 2.32.0 |
|---|---|
| PyPI | requests 2.32.0 |
| conda-forge | requests 2.32.0 |
| Debian | python3-requests 2.32.0-1 |
| Homebrew | python-requests 2.32.0 |
| Nix | python3.13-requests-2.32.0 |

A CVE filed against the PyPI name auto-matches the PyPI entry and nothing else; ecosystem-translation work fills the gap. PEP 804 (External dependency registry and name mapping) is the closest in-flight proposal; conda recipes can already declare an upstream purl.
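
A toy version of that translation step is shown below, assuming each repackaging record carries an upstream purl. The records, the upstream_purl field name, and affected_repackagings are all invented for illustration, and version-range matching is left out entirely.

```python
# Hypothetical repackaging records; a real registry would hold many more fields.
REPACKAGINGS = [
    {"ecosystem": "conda-forge", "name": "requests", "upstream_purl": "pkg:pypi/requests"},
    {"ecosystem": "Debian", "name": "python3-requests", "upstream_purl": "pkg:pypi/requests"},
    {"ecosystem": "Debian", "name": "python3-yaml", "upstream_purl": "pkg:pypi/pyyaml"},
]


def affected_repackagings(advisory_purl: str, repackagings: list) -> list:
    """Find downstream packages whose declared upstream matches an advisory's purl."""
    return [
        (record["ecosystem"], record["name"])
        for record in repackagings
        if record["upstream_purl"] == advisory_purl
    ]


# An advisory filed against the PyPI project now reaches the renamed downstream packages.
for ecosystem, name in affected_repackagings("pkg:pypi/requests", REPACKAGINGS):
    print(ecosystem, name)
```

The lookup itself is trivial; the hard part the roundtable pointed at is getting every ecosystem to record the upstream identifier in the first place.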

Other notes from the discussion: connect packages via source-repository URL, distinguish patch builds that change build recipes from patches that change source code, and consider how SLSA-style provenance carries through dependency chains. Cooldown periods on releases come with a cost of their own: if widely adopted, they delay vulnerability discovery.

Dependency resolution and nab — Damian Shaw

Repository: github.com/notatallshaw/nab. Damian Shaw (GitHub: @notatallshaw) is a pip maintainer. nab is a pure-Python dependency resolver based on PubGrub, with the medium-term goal of becoming a drop-in swap for pip’s current resolver and an available library for other tools. It fixes bugs that uv inherits from the pubgrub-rs library, and performance on uncached resolves is comparable to uv.

PubGrub is a dependency-resolution algorithm developed by Natalie Weizenbaum for the Dart package manager. When the resolver hits an incompatibility it derives a generalized cause and records it as a permanent fact so the same conflict cannot resurface, which both prunes the search space and produces actionable error messages that name the conflicting constraints. In the Python ecosystem, uv uses PubGrub via the pubgrub-rs Rust crate; pip and PDM instead use resolvelib, which is a backtracking resolver rather than a PubGrub-style one.
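
For contrast, here is what plain chronological backtracking looks like on a toy index. It is neither PubGrub nor resolvelib's API: INDEX and resolve are made up for this sketch, versions are bare integers, and nothing is learned from a failure, so the same dead end can be rediscovered many times on a larger graph.

```python
# Hypothetical index: project -> version -> {dependency: set of allowed versions}
INDEX = {
    "app": {1: {"lib": {1, 2}, "tool": {2}}},
    "lib": {2: {"core": {2}}, 1: {"core": {1}}},
    "tool": {2: {"core": {1}}},
    "core": {1: {}, 2: {}},
}


def resolve(requirements, pins=None):
    """Return {project: version} satisfying every requirement, or None.

    `requirements` is a list of (project, allowed_versions) pairs.
    """
    pins = dict(pins or {})
    if not requirements:
        return pins
    (name, allowed), rest = requirements[0], requirements[1:]
    if name in pins:
        # Already chosen: either the pin satisfies this requirement or we backtrack.
        return resolve(rest, pins) if pins[name] in allowed else None
    for version in sorted(INDEX[name], reverse=True):  # prefer newest first
        if version not in allowed:
            continue
        deps = list(INDEX[name][version].items())
        result = resolve(rest + deps, {**pins, name: version})
        if result is not None:
            return result
    return None  # every candidate failed; the caller tries its next option


print(resolve([("app", {1})]))
```

Here lib 2 is tried first and fails because tool 2 needs core 1, so the search falls back to lib 1. A PubGrub-style resolver would additionally record why lib 2 and tool 2 cannot coexist and reuse that fact in later branches and in its error message.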

Today nab is structured as four pluggable layers: nab-resolver (the PubGrub-based resolver core), nab-python (Python-specific resolution), nab-index (PyPI-style index access), and the top-level nab package that wires the three together into the CLI.

flowchart TB
classDef cli fill:#DBEAFE,stroke:#1E40AF,color:#1E3A8A;
classDef lib fill:#DCFCE7,stroke:#15803D,color:#14532D;
CLI[nab — CLI / orchestrator<br/>reads pyproject.toml, writes pylock.toml]:::cli
CLI --> R[nab-resolver<br/>PubGrub resolver core]:::lib
CLI --> P[nab-python<br/>Python-specific resolution<br/>markers, extras, requirements]:::lib
CLI --> I[nab-index<br/>PyPI-style index access<br/>HTTP transport, metadata fetch]:::lib

As a secondary use case it can also emit PEP 751 lockfiles and leaves installation to existing tools; it ships a security-first build policy switch (never / build-local (default) / build-remote) for builds during resolution.

First impression after sitting with the project: nab is impressive for a tool built in about two months. The resolver design is solid and performance is competitive with uv on uncached resolves. The lightning-talk slot was an introduction; the roundtable focused on what would still need to land before nab could become a pip-side resolver alternative, with the lockfile output a useful side benefit.

My own interest in nab is the pylock.toml generation. I have spent the last few months trying to land PEP 751 lockfile output in two other places, uv#14728 and pip-tools#2380, and both stalled for different reasons. A fresh tool focused on resolution plus PEP 751 output therefore appeals to me. Damian and I worked through the gaps together during the roundtable; I filed them as tracking issues against the repo afterwards. They are the ones that would need to close before I could adopt nab as a drop-in solution for that workflow:

- Universal-mode coverage. Cross-platform lockfiles cannot include PyPy alongside CPython, and ad-hoc cross-platform locks need a pyproject.toml edit because there is no CLI flag for the matrix.

- CLI ergonomics. Common flags from pip and uv (--constraint, --pre, --upgrade-package, --verbose/--quiet) are not yet present, and there is no progress feedback or diff summary across re-locks.

- PEP 751 portability. Reproducibility gaps include missing SOURCE_DATE_EPOCH support, file:// URLs that are not rewritten to relative paths in the lockfile, and an unpopulated packages.dependencies graph.

- Resolution stability. No seed-pins from a prior lockfile means unrelated packages can churn on re-lock; some dependency-group conflict messages do not name which groups disagree.

- Networking in corporate environments. Both transports skip proxy environment variables and the OS certificate store, which keeps nab from running behind TLS-intercepting proxies.

- Docs. The current flat guides directory would benefit from restructuring along the Diataxis framework.

The project moves quickly. Within roughly 24 hours of filing the issues, Damian had already merged fixes covering lockfile portability, PEP 751 spec conformance, the security gaps around auth credentials in lockfile URLs, the networking issues, and several CLI ergonomics fixes: PRs #41, #42, and #43 closed sixteen tracking issues in one batch.

Wrapping Up

The format ran tight to schedule. The roundtables let attendees pick a thread and follow it across several ecosystems in one afternoon. I have attended every Packaging Summit since 2019, and this year felt like the best one yet. There was a lot of laughter and eager collaboration across project boundaries, and the audience keeps growing year over year. With the Packaging Council coming online this fall, that pattern should accelerate.

If you are heavily involved in Python packaging, please consider running for a seat on the council this fall. If you have an opinion but not the bandwidth to run, the lighter lift is to become a voting PSF member so you can nominate candidates and vote in the election. Two paths qualify: Supporting membership ($99/year, with a sliding-scale option) or self-certified Contributing membership (free, granted to people who dedicate at least five hours per month volunteering on work that advances the PSF’s mission, such as maintaining open-source projects, organizing Python events, or serving on a PSF working group).

Thank you to the organizers, to every speaker for putting their work up for discussion, and to everyone who jumped into the deletions, post-release, and ecosystem-bridge conversations in the room.